- 8162 Views

- 9 replies

- 2 kudos

How to run the tests of the default Python asset bundle, and how to run from the terminal in general

I created an asset bundle from the default Python template. I am able to run main.py with "upload and run file" from within VS Code, which logs "5/23/2025, 9:01:29 AM - Uploading assets to databricks workspace..." followed by "5/23/2025, 9:01:31 AM - Creating execution context on cluster ..."

I figured it out from the docs (https://learn.microsoft.com/en-us/azure/databricks/dev-tools/databricks-connect/cluster-config#cluster). I don't put the cluster in the code myself because all code runs in Jobs (on job clusters). I only use it for developmen...
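Following the reply's approach of keeping the cluster out of the code, a minimal sketch for local test runs could read the cluster from the `DATABRICKS_CLUSTER_ID` environment variable (one of the configuration options the Databricks Connect documentation describes) and only create the session when it is needed. The helper names `resolve_cluster_id` and `get_spark` are hypothetical, and the sketch assumes databricks-connect is installed and authentication is already configured, for example through a Databricks CLI profile.

```python
import os
from typing import Mapping, Optional


def resolve_cluster_id(env: Optional[Mapping[str, str]] = None) -> str:
    """Return the cluster ID for Databricks Connect from the environment.

    Hypothetical helper: reading DATABRICKS_CLUSTER_ID keeps the cluster out
    of the source code, so the same code can run unchanged in Jobs.
    """
    env = os.environ if env is None else env
    cluster_id = env.get("DATABRICKS_CLUSTER_ID", "").strip()
    if not cluster_id:
        raise RuntimeError("DATABRICKS_CLUSTER_ID is not set; export it before running tests.")
    return cluster_id


def get_spark():
    """Create a Spark session through Databricks Connect (local development only)."""
    # Imported lazily so this module can be imported without databricks-connect installed.
    from databricks.connect import DatabricksSession

    return DatabricksSession.builder.clusterId(resolve_cluster_id()).getOrCreate()
```

From a terminal, something like `export DATABRICKS_CLUSTER_ID=<your-cluster-id>` followed by `pytest` would then pick the cluster up without hard-coding it, while code deployed to Jobs can keep using the job cluster's own session.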

- 1386 Views

- 1 replies

- 1 kudos

Efficiently Delete/Update/Insert Large Datasets of Records in PostgreSQL from Spark DataFrame

So I am migrating my ETL process from Pentaho to Databricks, and I am using PySpark. I have posted all the details here: Staging Ground: How to Insert,Update,Delete data using databricks for large records in PostgreSQL from Spark DataFrame - Stack Overflow. C...

Hello @skohade1! The link you provided isn't accessible. Could you please share the relevant details directly here? This will help Community members better understand your scenario.
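The thread doesn't include the details, but one common pattern for bulk changes from Spark into PostgreSQL (an assumption here, not something stated in the post) is to write the DataFrame into a staging table with Spark's JDBC writer (`df.write.format("jdbc")`) and then let PostgreSQL apply the changes set-wise with `INSERT ... ON CONFLICT` and `DELETE ... WHERE NOT EXISTS`. The hypothetical helpers below only build those SQL strings. The table and column names are illustrative, identifiers are assumed trusted (no quoting is done), the target needs a primary key or unique constraint on the key columns, and the staging table must not contain duplicate keys.

```python
from typing import Sequence


def build_upsert_sql(target: str, staging: str,
                     key_cols: Sequence[str], value_cols: Sequence[str]) -> str:
    """Build an INSERT ... ON CONFLICT statement copying staging rows into target."""
    cols = ", ".join([*key_cols, *value_cols])
    keys = ", ".join(key_cols)
    if value_cols:
        # Overwrite non-key columns with the incoming (EXCLUDED) values.
        action = "DO UPDATE SET " + ", ".join(f"{c} = EXCLUDED.{c}" for c in value_cols)
    else:
        # Nothing to update: only insert rows whose keys are new.
        action = "DO NOTHING"
    return (f"INSERT INTO {target} ({cols}) SELECT {cols} FROM {staging} "
            f"ON CONFLICT ({keys}) {action}")


def build_delete_missing_sql(target: str, staging: str, key_cols: Sequence[str]) -> str:
    """Build a DELETE removing target rows whose keys are absent from staging."""
    match = " AND ".join(f"t.{c} = s.{c}" for c in key_cols)
    return (f"DELETE FROM {target} AS t WHERE NOT EXISTS "
            f"(SELECT 1 FROM {staging} AS s WHERE {match})")


if __name__ == "__main__":
    print(build_upsert_sql("public.orders", "public.orders_stg", ["id"], ["amount", "status"]))
    print(build_delete_missing_sql("public.orders", "public.orders_stg", ["id"]))
```

The statements would then be executed inside PostgreSQL, ideally in one transaction (for example from a driver-side database connection). The delete step only makes sense if the staging table holds a full snapshot of the source rather than just the changed rows.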

- 757 Views

- 1 replies

- 0 kudos

Hello @mahima13! Could you please share a bit more about the issue? Are you seeing an error when trying to join, or are you unable to locate the session link?

- 1684 Views

- 2 replies

- 0 kudos

Databricks Billing support

Hi everyone, I don't know if this is the right channel for this type of query, but I couldn't get Databricks billing support anywhere else, which is why I am posting here. I am trying to contact the Databricks sales team or billing support, but I am u...

Hi @brycejune, I don't have a support plan; I was only exploring the managed MLflow provided by Databricks. The Help Center did, however, assign me a Databricks account executive. Hopefully, they can help me with billing.

- 1333 Views

- 1 replies

- 0 kudos

Resolved! Kryterion suspended my certification exam

Hi Team, I took the Databricks Certified Associate Developer for Apache Spark - Python exam on 18-05-2025. I was continuously in front of the camera when an alert came up, and the proctor asked me to show the entire room and bed. I showed him ...

Hi @vamsikrishna880, please consider removing your Webassessor ID from this post. We handle tickets via our ticket system so that individuals do not need to post their personal contact information in this forum.

- 8059 Views

- 10 replies

- 2 kudos

Variables in databricks.yml "include:" - Asset Bundles

Hi, we've got an app that we deploy to multiple customers' workspaces. We're looking to transition to asset bundles. We would like to structure our resources like: `-src/ -resources/ |-- customer_1/ |-- job_1 |-- job_2 |-- customer_2/ |-- job_...`

@_escoto You can achieve this by defining the entire job in the "targets" section for your development target. Resources defined in the top-level "resources" block are deployed to all targets. Resources defined both in the top-level "resources" block...
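The scoping rule in the reply (top-level resources are deployed to every target, while a job defined only under a target's own section goes only to that target) can be modeled with a small Python sketch over a dict shaped like a parsed databricks.yml. This is illustrative only: the dict layout mirrors the `resources` and `targets` keys named in the reply, the function name `jobs_for_target` is hypothetical, and it deliberately does not model how settings are merged when a job is defined in both places.

```python
from typing import Any, Dict, Set


def jobs_for_target(bundle: Dict[str, Any], target: str) -> Set[str]:
    """Return the job keys that would be deployed to `target` under the scoping rule.

    Top-level resources.jobs apply to every target; targets.<name>.resources.jobs
    apply only to that target. Merge behavior for jobs in both places is not modeled.
    """
    top_level = (bundle.get("resources") or {}).get("jobs") or {}
    target_cfg = (bundle.get("targets") or {}).get(target) or {}
    target_only = (target_cfg.get("resources") or {}).get("jobs") or {}
    return set(top_level) | set(target_only)


if __name__ == "__main__":
    bundle = {
        "resources": {"jobs": {"shared_job": {}}},
        "targets": {
            "dev": {"resources": {"jobs": {"dev_only_job": {}}}},
            "prod": {},
        },
    }
    print(sorted(jobs_for_target(bundle, "dev")))   # dev gets both jobs
    print(sorted(jobs_for_target(bundle, "prod")))  # prod gets only the shared job
```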

- 841 Views

- 1 replies

- 0 kudos

Data Engineer Associate voucher

Hi all, I am new to Databricks and want to learn it and get certified for my job search. Are there any free vouchers or something similar for the exams?

Hello @bindu22! Currently, Databricks isn’t offering any Certification discount promotions, but Learning Festival events are held once per quarter — in January, April, July, and October. Participants receive a 50% off voucher. Details for the next Le...

- 4685 Views

- 3 replies

- 0 kudos

Databricks Apps is now available in Public Preview

Databricks Apps, a new way to build and deploy internal data and AI applications, is now available in Public Preview. Databricks Apps lets developers build native apps using frameworks like Dash, Shiny and Streamlit, enabling data applications for non...

Databricks Apps is Generally Available as of May 13, 2025. Here is the link to the documentation.

- 1598 Views

- 1 replies

- 0 kudos

Databricks Bundle Deployment Question

Hello, everyone! I’ve been working on deploying Databricks bundles using Terraform, and I’ve encountered an issue. During the deployment, the Terraform state file seems to reference resources tied to another user, which causes permission errors. I’ve ...

Hey @leandro09, in my opinion this kind of error is often caused by the Terraform state still tracking a resource that was created or managed by another user, which your current user doesn't have permission to read or update. `terraform state list` This will...

- 1215 Views

- 1 replies

- 0 kudos

Resolved! My Databricks exam got suspended.

Hello Databricks Support Team, request ID: #00667456. I faced an issue while taking my Databricks Certified Associate Developer for Apache Spark exam. It is completely unfair that alerts come up in the middle of the exam due to blinking of eyes and proc...

Hello @Siddartha01! It looks like this post duplicates the one you recently posted. A response has already been provided in the original thread. I recommend continuing the discussion in that thread to keep the conversation focused and organised.

- 5024 Views

- 1 replies

- 0 kudos

Unable to create a folder inside DBFS on Community Edition

I'm using the Community Edition. I'm trying to create a storage folder inside DBFS -> FileStore for my datasets. I click on Create, give a folder name, and poof. Nothing. No new folder. I tried refreshing, logging out and logging in. Tried to create folder mu...

Hi Sachin, for DBFS V1 as used in Community Edition, there may be limitations regarding the creation of folders or changes to file structures, particularly under specific paths such as /FileStore, which is commonly used to store datasets, plots, and oth...

- 2715 Views

- 3 replies

- 0 kudos

Resolved! Does Unity Catalog support Iceberg?

Hi, I came across this post on querying an AWS Glue table in S3 from Databricks using Unity Catalog: https://medium.com/@sidnahar13/aws-glue-meets-unity-catalog-effortless-data-governance-querying-in-databricks-5138f1e574a4. The post says support for...

As of now, Databricks does not support non-Spark methods (such as direct REST API calls, JDBC/ODBC connections outside Spark, or other query engines within Databricks) to query S3 Tables directly. Unity Catalog and Databricks’ native cataloging foc...

- 15899 Views

- 12 replies

- 13 kudos

Resolved! Uploading local file

For the last two days I have been getting the error "ERROR OCCURRED WHEN PROCESSING FILE:[OBJECT OBJECT]" while uploading any CSV or JSON file from my local system. My previously uploaded files still show up and run, but I get the error after uploading a new file.

If you are using Databricks Community Edition, the error occurs because the file you are trying to upload contains PII or SPII data (Personally Identifiable Information or Sensitive Personally Identifiable Information), i.e. words like dob, To...
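If the upload check really keys off PII-like words, a quick local pre-check can show which columns might be triggering it before you upload. This is a hypothetical helper, not a Databricks tool: it only scans the CSV header row, uses case-insensitive substring matching, and takes the list of terms as input because the reply's full list is cut off (only "dob" is visible).

```python
import csv
import io
from typing import Iterable, List, TextIO


def flag_header_columns(f: TextIO, terms: Iterable[str]) -> List[str]:
    """Return header column names containing any of `terms` (case-insensitive).

    Only the first CSV row (the header) is inspected; data rows are ignored.
    """
    header = next(csv.reader(f), [])
    lowered = [t.lower() for t in terms]
    return [col for col in header if any(t in col.lower() for t in lowered)]


if __name__ == "__main__":
    sample = io.StringIO("name,Customer_DOB,city\nA,2000-01-01,X\n")
    print(flag_header_columns(sample, ["dob"]))
```

For a real file, open it with `open(path, newline="")` and pass the file object instead of the in-memory sample.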

- 4869 Views

- 3 replies

- 5 kudos

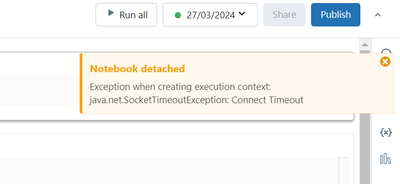

Getting java.util.concurrent.TimeoutException: Timed out after 15 seconds on community edition

I'm using Databricks Community Edition for learning purposes, and whenever I run a notebook, I get: `Exception when creating execution context: java.util.concurrent.TimeoutException: Timed out after 15 seconds databricks`. I have deleted cluster ...

@stevieg95 The issue is that you've run the notebook with an old connector attached to your old, deleted cluster with the same name; when you run it against a terminated cluster, you see this error. First delete the existing cluster, log out, and detach the old cluster as b...

- 3232 Views

- 6 replies

- 0 kudos

Issue with Multiple Stateful Operations in Databricks Structured Streaming

Hi everyone, I'm working with Databricks Structured Streaming and have encountered an issue with stateful operations. Below is my pseudo-code: `df = df.withWatermark("timestamp", "1 second")` `df_header = df.withColumn("message_id", F.col("payload.id"))`...

According to this blog post (Multiple Stateful Streaming Operators | Databricks Blog), this should basically work, right? However, I'm getting the same error. Or am I missing something? `rate_df = spark.readStream.format("rate").option("rowsPerSecond", "1")`...
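The pseudo-code in this thread starts with `withWatermark("timestamp", "1 second")`. To show roughly which records such a watermark treats as late, here is a simplified pure-Python model of event-time watermarking over micro-batches. It is not Spark's implementation: Spark only guarantees that data within the delay is not dropped (older data may or may not be), and this toy does not reproduce how Spark combines watermarks across multiple stateful operators. Event times are integer seconds and the function name is hypothetical.

```python
from typing import List, Optional, Tuple

BatchResult = Tuple[Optional[int], List[int], List[int]]


def simulate_watermark(batches: List[List[int]], delay: int) -> List[BatchResult]:
    """Toy model of event-time watermarking; returns (watermark, kept, dropped) per batch.

    The watermark for a batch is (max event time seen in earlier batches) - delay,
    and events older than it are dropped as late. The first batch has no watermark.
    """
    max_seen: Optional[int] = None
    results: List[BatchResult] = []
    for batch in batches:
        watermark = None if max_seen is None else max_seen - delay
        kept = [ts for ts in batch if watermark is None or ts >= watermark]
        dropped = [ts for ts in batch if watermark is not None and ts < watermark]
        results.append((watermark, kept, dropped))
        # The watermark only advances after the batch has been processed.
        if batch:
            batch_max = max(batch)
            max_seen = batch_max if max_seen is None else max(max_seen, batch_max)
    return results


if __name__ == "__main__":
    for wm, kept, dropped in simulate_watermark([[10, 12], [11, 9, 13], [8, 14]], delay=1):
        print(f"watermark={wm} kept={kept} dropped={dropped}")
```

With a 1-second delay, the second batch runs with a watermark of 11 (12 - 1), so the event at 9 is treated as late, and the third batch runs with 12, so the event at 8 is treated as late.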
