- 2728 Views

- 2 replies

- 0 kudos

Azure Databricks - Databricks AI Assistant missing on Azure Student Subscription?

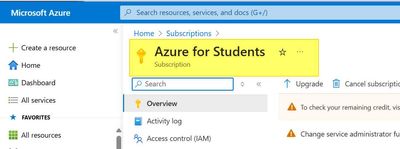

I am going through a course on Azure Databricks and have created a new Azure Databricks workspace. I am the owner of the subscription and created everything, so I assume I should have full admin rights. The following is my setup: Azure Student S...

@rodneyc8063 Azure’s documentation states: Tip: Admins: If you’re unable to enable Databricks Assistant, you might need to disable the "Enforce data processing within workspace Geography for Designated Services" setting. See “For an ac...

- 1037 Views

- 1 replies

- 0 kudos

Resolved! Access to Demo: Databricks Workspace Walkthrough

Hi, how can I get access to the hands-on (guided) Databricks Workspace Walkthrough in the free courses to practice? Thanks

Hello @MariaSaa! The free Databricks courses don’t include access to hands-on labs. Practical lab environments are currently available only through paid training options or with an Academy Labs subscription.

- 1715 Views

- 3 replies

- 0 kudos

Allow an external user to query SQL table in Databricks

I have a Delta table in a schema inside a catalog. How do I allow an external user to query the table via an API? I have scrolled through the documentation and a lot of other resources, but it's all very confusing. The AI assistant is way too naive. Can so...

We will need a bit more information. Are you asking (A) how an external user who is comfortable with code can invoke a SQL query via an API, or (B) how a non-technical external user can run a query through a simple UI? If it's option A, then you can create a person...
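For option A, a minimal sketch, assuming the external user already has a personal access token, permission to use a SQL warehouse, and `SELECT` on the table. It builds a request for the Databricks SQL Statement Execution API (`POST /api/2.0/sql/statements`) with only the Python standard library. The host, token, warehouse ID, and table name are hypothetical placeholders, and the helper names are illustrative, not part of any Databricks SDK.

```python
import json
import urllib.request


def build_statement_request(host: str, token: str, warehouse_id: str,
                            statement: str,
                            wait_timeout: str = "30s") -> urllib.request.Request:
    """Build (but do not send) a SQL Statement Execution API request."""
    body = {
        "warehouse_id": warehouse_id,
        "statement": statement,
        # How long the call waits synchronously before returning a statement ID to poll.
        "wait_timeout": wait_timeout,
    }
    return urllib.request.Request(
        f"https://{host}/api/2.0/sql/statements",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",  # personal access token
            "Content-Type": "application/json",
        },
        method="POST",
    )


def run_statement(req: urllib.request.Request) -> dict:
    """Send the request and return the parsed JSON response."""
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Hypothetical values: replace with your own workspace host, token, warehouse, and table.
example_req = build_statement_request(
    host="adb-1234567890123456.7.azuredatabricks.net",
    token="dapi-EXAMPLE-TOKEN",
    warehouse_id="abcdef1234567890",
    statement="SELECT * FROM my_catalog.my_schema.my_table LIMIT 10",
)
# run_statement(example_req) would send it over the network.
```

For small results returned inline, the rows should appear under `result.data_array` in the response; longer-running queries return a statement ID that you poll, so check the API reference before relying on either shape.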

- 4462 Views

- 1 replies

- 0 kudos

Install bundle built artifact as notebook-scoped library

We are having a hard time finding an intuitive way to use the artifacts we build and deploy with `databricks bundle deploy` as notebook-scoped libraries. Desired result: having internal artifacts available notebook-scoped for jobs by config, or having an easier way...

We were not able to find a clean solution for this, so we ended up referencing the common lib like this in every notebook where it is needed: `%pip install ../../../artifacts/.internal/common-0.1-py3-none-any.whl`

- 1594 Views

- 2 replies

- 0 kudos

Autoloader delete action on AWS S3

Hi folks, I have been using Auto Loader to ingest files from an S3 bucket. I added a trigger to the workflow to schedule the job to run every 10 minutes. However, recently I'm facing an error that makes the job keep failing after a few successful run....

There might be a few possibilities. Can you check these items? 1. Is there an S3 bucket policy configured, such as file deletion after a time window or a file validity period? 2. Check the Auto Loader configuration once again to validate the option of cleanu...

- 1177 Views

- 0 replies

- 0 kudos

Time Series Book by a Senior Solutions Architect

I recently worked with one of the Senior Solutions Architects at Databricks, Yoni Ramaswami (https://www.linkedin.com/in/yoni-r/), on a new book, "Time Series Analysis with Spark". Key Features: Quickly get started with your first models and explore the...

- 1712 Views

- 1 replies

- 0 kudos

Unable to pass an array of table names from a For each task and send it as a task parameter

I am sending the array below from a For each task: `["mv_t005u","mv_t005t","mv_t880"]`. In the task, I am reading the value with key: `mv_name`, value: `{{input}}`, but in the notebook I am getting the error below. Notebook code: `%sql REFRESH MATERIALIZED VIEW nonprod_emea.silver_loc...`

Hi @sivaram_mandepu, in the first screenshot, the input must be a valid JSON array, so instead of using `{{mvname: "mv_......"}}`, update it to `[ { "mvname": "mv_......." } ]`. In the third screenshot, the SQL error likely comes from a newline or extra sp...
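A minimal sketch of both fixes, assuming the For each inputs are an array of objects as suggested above and that the notebook task parameter `mvname` is set to `{{input.mvname}}` (my understanding of how For each exposes object fields; please verify in the Jobs UI). The helper names and the `my_catalog.my_schema` prefix are hypothetical. In the notebook, the raw value would come from `dbutils.widgets.get("mvname")`; here it is passed in directly so the logic can be checked outside Databricks.

```python
import json
import re

# Table names taken from the question.
views = ["mv_t005u", "mv_t005t", "mv_t880"]

# Valid For each input: a JSON array of objects, one object per iteration.
for_each_inputs = json.dumps([{"mvname": v} for v in views])


def is_valid_json_array(text: str) -> bool:
    """Return True only if text parses as a JSON array."""
    try:
        return isinstance(json.loads(text), list)
    except json.JSONDecodeError:
        return False


# Letters, digits and underscores only; rejects anything that could break the SQL.
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


def build_refresh_sql(raw_param: str, schema: str = "my_catalog.my_schema") -> str:
    """Clean a task parameter value and build the REFRESH statement (hypothetical helper)."""
    name = raw_param.strip()  # drops leading/trailing spaces and newlines
    if not _IDENTIFIER.match(name):
        raise ValueError(f"unexpected materialized view name: {raw_param!r}")
    return f"REFRESH MATERIALIZED VIEW {schema}.{name}"


print(for_each_inputs)
print(is_valid_json_array('{{mvname: "mv_t005u"}}'))  # the double-brace form is not JSON
print(build_refresh_sql("mv_t880\n"))
```

Stripping and validating the name before interpolating it addresses the stray newline or space, and also stops an unexpected value from being spliced into the SQL text.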

- 3090 Views

- 3 replies

- 0 kudos

Resolved! Init Scripts Error When Deploying a Delta Live Table Pipeline with Databricks Asset Bundles

Hello everyone, let me give you some context. I am trying to deploy a Delta Live Tables pipeline using Databricks Asset Bundles, and the pipeline requires a private library hosted in Azure DevOps. As far as I understand, this can be resolved in three ways: Installi...

I found the error: it was caused by the path to the init script defined in the bundle. I'm closing the post.

- 9483 Views

- 2 replies

- 0 kudos

FAILED_READ_FILE.NO_HINT error

We read data from CSV files in the volume into the table using COPY INTO. The first 200 files were loaded without problems, but now we can no longer add any new data to the table, and the error is FAILED_READ_FILE.NO_HINT. The CSV format is always th...

I came across the same issue: the file causing problems needed the CSV option `multiline` set back to its default, `false`, before it could be read: `df = spark.read.option("multiline", "false").csv("CSV_PATH")`. If this approach eliminates the error above, I ...

- 1428 Views

- 2 replies

- 1 kudos

webterm unminimize command missing?

Many commands in the web terminal tell you that a lot of packages have not been installed or have been minimized, and that you should run `unminimize` for a full interactive experience. This used to work great. However, I just tried it and the `unminimize` command is no...

1. No such command exists.
2. Probably not. We tend to dump old clusters and create new ones (for new sets of data) fairly frequently, and (I think) use the latest stable DBR when creating them.
3. I did find a workaround: `unminimize` has been added to apt so...

- 1974 Views

- 2 replies

- 0 kudos

Resolved! Change Data Feed And Column Masks

Hi there, I'm wondering if anyone can help me. I have a job set up to stream from one Change Data Feed-enabled Delta table to another Delta table, and it has been executing successfully. I then added column masks to the source table from which I am stream...

Hello mate, hope you're doing great. In that case, you can configure a service principal, add the proper roles as needed, and use it as the run owner. Re-run the stream so that your PII will not be displayed to other teams or people unless they are members. Simple ...

- 2532 Views

- 2 replies

- 1 kudos

Resolved! Databricks AI + Data Summit discount coupon

Hi Community, I hope you're doing well. I wanted to ask you the following: I want to go to Databricks AI + Data Summit this year, but it's super expensive for me. And hotels in San Francisco, as you know, are super expensive. So, I wanted to know how I ...

- 1821 Views

- 1 replies

- 0 kudos

Can we get the branch name from a notebook?

Hi folks, is there a way to display the current Git branch name from a Databricks notebook? Thanks

Yes, you can display the current Git branch name from a Databricks notebook in several ways.

Using the Databricks UI: the simplest method is to use the Databricks UI, which already shows the current branch name:

- In a notebook, look for the button nex...
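As a programmatic alternative to the UI, a minimal sketch using the Repos REST API (`GET /api/2.0/repos`, filtered with `path_prefix`), whose repo objects include a `branch` field. It assumes you already know the workspace host, have a token, and know the Git folder's workspace path; the exact path format depends on where the Git folder lives, and the example values and helper names below are hypothetical. Parsing is kept separate from the network call so it can be checked on its own.

```python
import json
import urllib.parse
import urllib.request


def build_repos_request(host: str, token: str, repo_path: str) -> urllib.request.Request:
    """Build a GET /api/2.0/repos request filtered to one Git folder path."""
    query = urllib.parse.urlencode({"path_prefix": repo_path})
    return urllib.request.Request(
        f"https://{host}/api/2.0/repos?{query}",
        headers={"Authorization": f"Bearer {token}"},
    )


def branch_from_response(payload: dict, repo_path: str):
    """Return the branch of the repo whose path matches exactly, or None."""
    for repo in payload.get("repos", []):
        # path_prefix can match several repos, so compare the full path.
        if repo.get("path") == repo_path:
            return repo.get("branch")
    return None


def current_branch(host: str, token: str, repo_path: str):
    """Call the API and extract the checked-out branch (needs network access)."""
    req = build_repos_request(host, token, repo_path)
    with urllib.request.urlopen(req) as resp:
        return branch_from_response(json.loads(resp.read()), repo_path)
```

Matching on the exact path matters because a prefix such as `/Repos/someone@example.com/my-repo` would also match `/Repos/someone@example.com/my-repo-2`.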

- 1108 Views

- 1 replies

- 0 kudos

DLT Pipeline

Hello, I have written the simple code below to write data to a catalog table using a simple DLT pipeline. In the program below, I am reading a file from a blob container and trying to write it to a catalog table. The new catalog table got created, but table d...

The issue with your DLT pipeline is that you've defined the table and schema correctly, but you haven't actually implemented the data loading logic in your `ingest_from_storage()` function. While you've created the function, you're not calling it any...

- 1372 Views

- 1 replies

- 0 kudos

How to get the hadoopConfiguration in a Unity Catalog standard access mode app?

Context: a job running on a job cluster configured in Standard access mode (Shared access mode); a Scala 2.12.15 / Spark 3.5.0 JAR program; Databricks Runtime 15.4 LTS. In this context, it is not possible to get `sparkSession.sparkContext`, as confirme...

In Unity Catalog standard access mode (formerly shared access mode) with Databricks Runtime 15.4 LTS, direct access to `sparkSession.sparkContext` is restricted as part of the security limitations. However, there are still ways to access the Hadoop c...
