- 1831 Views

- 2 replies

- 0 kudos

JDBC Driver cannot connect when using TokenCachePassPhrase property

Hello all, I'm looking for suggestions on enabling the token cache when using browser-based SSO login. I'm following the instructions in the Databricks-JDBC-Driver-Install-and-Configuration-Guide. For my users, I would like to enable the token ca...


The error you encountered (Cannot invoke "java.nio.file.attribute.AclFileAttributeView.setAcl(...)" because "<local6>" is null) might be caused by permission or file system issues at the location where the token cache store is accessed. When EnableTokenCache=0, the to...
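For reference, here is a minimal sketch of a connection URL that turns the token cache on. `EnableTokenCache` and `TokenCachePassPhrase` come from the thread; `AuthMech=11` with `Auth_Flow=2` (browser-based OAuth user-to-machine login), the host and the `httpPath` value are assumptions to check against the Install and Configuration Guide for your driver version. The helper name `build_jdbc_url` is hypothetical.

```python
def build_jdbc_url(host: str, http_path: str, passphrase: str = "",
                   enable_cache: bool = True) -> str:
    """Assemble a Databricks JDBC URL for browser-based SSO with an optional token cache.

    AuthMech=11 / Auth_Flow=2 are assumptions to verify against the driver guide.
    """
    props = {
        "httpPath": http_path,
        "AuthMech": "11",   # assumption: OAuth 2.0 authentication
        "Auth_Flow": "2",   # assumption: browser-based user-to-machine flow
        "EnableTokenCache": "1" if enable_cache else "0",
    }
    if enable_cache:
        if not passphrase:
            # This sketch insists on a passphrase whenever the cache is enabled.
            raise ValueError("a passphrase is required when the token cache is enabled")
        if ";" in passphrase:
            # Settings are ';'-separated, so a ';' in the value would split the URL.
            raise ValueError("passphrase must not contain ';'")
        props["TokenCachePassPhrase"] = passphrase
    settings = ";".join(f"{key}={value}" for key, value in props.items())
    return f"jdbc:databricks://{host}:443;{settings}"
```

Building the URL in one place makes it easy to flip `enable_cache` off while you investigate whether the cache directory's file system is the culprit.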


- 5608 Views

- 3 replies

- 1 kudos

Databricks Notebook says "Connecting.." for some users

For some users, after they click on a notebook, the screen says "connecting..." and the notebook does not open. The users are using the Chrome browser, and the same happens with Edge as well. What could be the reason?


I am facing the same issue. It always keeps saying "opening the notebook". Even when it does open and connects to the cluster, it then times out.


- 4822 Views

- 8 replies

- 1 kudos

Notebook Detached Error: exception when creating execution context: java.net.SocketTimeoutException:

Hello Community, I have been facing this issue since yesterday. After attaching the cluster to a notebook and running a cell, I get the following error in Databricks Community Edition: Notebook detached: exception when creating execution cont...


Hi all, I am also facing this issue. If anyone knows how to resolve it, please post the solution here.


- 4018 Views

- 7 replies

- 0 kudos

preloaded_docker_images: how do they work?

At my org, when we start a Databricks cluster, it often takes a while to become available due to (1) instance provisioning, (2) library loading, and (3) init script execution. I'm exploring whether an instance pool could be a viable strategy for im...


Hello, when we specify a Docker image with credentials in the instance pool configuration, should we also specify the credentials in the cluster configuration, given that the image is already pulled onto the pool instances?
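To make the question concrete, here is a sketch of the two API payloads involved, written as Python dicts. `preloaded_docker_images` (on the pool) and `docker_image` with `basic_auth` (on the cluster) are the field names we understand the Instance Pools and Clusters APIs to use; the registry, image tag, pool name, node type and the helper `image_is_preloaded` are hypothetical. The thread does not say whether the cluster's `basic_auth` can be dropped, so this sketch conservatively keeps the credentials in both places.

```python
# Hypothetical placeholders: registry, image tag, pool name and node type.
IMAGE_URL = "myregistry.azurecr.io/team/runtime:1.0"
REGISTRY_AUTH = {"username": "<registry-user>", "password": "<registry-secret>"}

# Instance pool payload: idle instances pull the listed images ahead of time.
POOL_SPEC = {
    "instance_pool_name": "docker-prewarmed-pool",
    "node_type_id": "Standard_DS3_v2",
    "min_idle_instances": 1,
    "preloaded_docker_images": [{"url": IMAGE_URL, "basic_auth": REGISTRY_AUTH}],
}

# Cluster payload: still names the image it runs (credentials kept, conservatively).
CLUSTER_SPEC = {
    "cluster_name": "etl-from-pool",
    "spark_version": "15.4.x-scala2.12",
    "instance_pool_id": "<pool-id>",
    "num_workers": 2,
    "docker_image": {"url": IMAGE_URL, "basic_auth": REGISTRY_AUTH},
}


def image_is_preloaded(cluster: dict, pool: dict) -> bool:
    """True if the cluster's Docker image URL exactly matches one the pool preloads."""
    wanted = cluster.get("docker_image", {}).get("url")
    preloaded = {img.get("url") for img in pool.get("preloaded_docker_images", [])}
    return wanted is not None and wanted in preloaded
```

A URL mismatch (for example a different tag) means the pool's cached image does not help the cluster at all, which is worth ruling out before tuning credentials.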


- 4525 Views

- 2 replies

- 0 kudos

Is there an automated way to strip notebook outputs prior to pushing to github?

We have a team that works in Azure Databricks on notebooks. We are not allowed to push any data to GitHub per corporate policy. Instead of everyone having to always remember to clear their notebook outputs prior to commit and push, is there a way this ...


Hi, since pushing data to GitHub isn't allowed, clearing notebook outputs before they reach version control is important. You can automate this with a pre-commit hook or a script in your internal CI/CD pipeline (if one exists). Tools like...
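A minimal hand-rolled version of such a hook might look like the sketch below. It assumes the notebooks are committed as `.ipynb` files (Databricks source-format `.py` notebooks do not carry outputs); the function names are hypothetical. It uses only the standard library and clears `outputs` and `execution_count` from every code cell.

```python
import json
from pathlib import Path


def has_outputs(nb: dict) -> bool:
    """True if any code cell still carries outputs or an execution count."""
    return any(
        cell.get("cell_type") == "code"
        and (cell.get("outputs") or cell.get("execution_count") is not None)
        for cell in nb.get("cells", [])
    )


def strip_outputs(nb: dict) -> dict:
    """Clear outputs and execution counts from every code cell (in place)."""
    for cell in nb.get("cells", []):
        if cell.get("cell_type") == "code":
            cell["outputs"] = []
            cell["execution_count"] = None
    return nb


def strip_file(path: Path) -> bool:
    """Rewrite one .ipynb file without outputs; return True if it changed."""
    nb = json.loads(path.read_text(encoding="utf-8"))
    if not has_outputs(nb):
        return False
    path.write_text(json.dumps(strip_outputs(nb), indent=1, ensure_ascii=False) + "\n",
                    encoding="utf-8")
    return True


def main(argv: list[str]) -> int:
    """Hook entry point: strip every .ipynb argument; return 1 if any file changed."""
    changed = [p for p in argv if p.endswith(".ipynb") and strip_file(Path(p))]
    for p in changed:
        print(f"stripped outputs from {p}; re-stage it and commit again")
    return 1 if changed else 0
```

A hook script would call `sys.exit(main(sys.argv[1:]))`; the pre-commit framework passes the staged file names as arguments, and the non-zero exit stops the commit so the cleaned files can be re-staged. The same check can run in CI as a safety net.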


- 3156 Views

- 3 replies

- 1 kudos

Replacing Excel with Databricks

I have a client that currently uses a lot of Excel with VBA and advanced calculations. Their source data is often stored in SQL Server. I am trying to make the case to move to Databricks. What's a good way to make that case? What are some advantages t...


To add to this, my team and I have been using Databricks in an enterprise environment to replace Excel-based calculations that rely on SQL-stored data with calculations served as Model Serving endpoints (APIs). The initial 'translation' work can be tedi...
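As an illustration of what "calculations served as an API" looks like from the caller's side, here is a sketch that builds a request to a Model Serving endpoint's `/serving-endpoints/<name>/invocations` URL with a `dataframe_records` JSON body. The workspace host, the endpoint name `pricing-calc`, the input columns and the token are hypothetical; the request is only built here, not sent.

```python
import json
import urllib.request


def build_invocation_request(host: str, endpoint: str, token: str,
                             rows: list[dict]) -> urllib.request.Request:
    """Build (but do not send) a POST to a Model Serving endpoint's invocations URL."""
    url = f"https://{host}/serving-endpoints/{endpoint}/invocations"
    # dataframe_records: one JSON object per input row, keyed by column name.
    body = json.dumps({"dataframe_records": rows}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/json"},
    )


# Hypothetical endpoint and columns standing in for one spreadsheet calculation.
req = build_invocation_request(
    "adb-1234567890123456.7.azuredatabricks.net", "pricing-calc", "<token>",
    [{"unit_price": 12.5, "quantity": 3, "discount_pct": 10}],
)
# To actually call it: with urllib.request.urlopen(req) as resp: print(json.load(resp))
```

One argument for this design is that Excel, Power BI or any other client can call the same endpoint, so the calculation logic lives in one versioned place instead of in many workbook copies.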


- 1394 Views

- 2 replies

- 1 kudos

Resolved! Search page to search code inside .py files

Hello, I hope you are doing well. On the search page, it seems to search only file names, not the code inside .py files. Is there an option I'm missing that enables searching inside .py files? Best, Alan


Hello, it seems Databricks does not support this. An easy workaround for us is to search directly in our Azure DevOps Repos.
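Along the same lines, if the repo is cloned locally, a few lines of standard-library Python can search the contents of every `.py` file (`git grep` does the same from a shell). This is a sketch; the function name `search_py_files` is hypothetical.

```python
from pathlib import Path


def search_py_files(root, needle: str, case_sensitive: bool = False):
    """Yield (relative path, line number, stripped line) for each .py line containing needle."""
    root = Path(root)
    target = needle if case_sensitive else needle.lower()
    for path in sorted(root.rglob("*.py")):
        try:
            lines = path.read_text(encoding="utf-8").splitlines()
        except (UnicodeDecodeError, OSError):
            continue  # skip unreadable or non-UTF-8 files
        for lineno, line in enumerate(lines, start=1):
            haystack = line if case_sensitive else line.lower()
            if target in haystack:
                yield path.relative_to(root).as_posix(), lineno, line.strip()
```

For example, `for hit in search_py_files("my-repo", "load_orders"): print(*hit)` prints each matching file, line number and line.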


- 1483 Views

- 1 replies

- 0 kudos

Community Edition cluster detach: java.util.TimeoutException

Hi folks, I was exploring Databricks Community Edition and came across a cluster issue, most likely caused by a JDBC driver java.util.TimeoutException. Basically, the cluster connects and executes for 15 seconds or so, which is a socket limit, and disables any...


@Kishore23 wrote: Hi folks i was exploring the databricks community edition and came across a cluster issue mostly because of jdbc driver java.util.timeoutexception . basically the cluster connects and executes for 15 sec or so which is a so...


- 52248 Views

- 11 replies

- 1 kudos

Error: Folder xxxx@xxx.com is protected

Hello, on Azure Databricks I'm trying to remove a folder in the Repos folder using the following command: databricks workspace delete "/Repos/xxx@xx.com". I got the following error message: databricks workspace delete "/Repos/xxxx@xx.com" Error: Folder ...


Hello Databricks Forums, when you see the Azure Databricks error message "Folder xxxx@xxx.com is protected," it means you are attempting to remove a system-protected folder, usually tied to a user's workspace, particularly under the...
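One possible route, which is our assumption and not something the thread confirms, is to leave the protected per-user folder itself alone and delete the individual repos inside it. The sketch below uses the Databricks SDK for Python (`WorkspaceClient().repos.list(path_prefix=...)` and `repos.delete(repo_id=...)`); the helper names are hypothetical, it defaults to a dry run, and it needs the `databricks-sdk` package plus configured credentials. The pure path filter works without the SDK.

```python
from posixpath import normpath


def repos_under(user_folder: str, repo_paths: list[str]) -> list[str]:
    """Return the repo paths strictly inside user_folder; the folder itself is excluded."""
    base = normpath(user_folder).rstrip("/") + "/"
    return [p for p in repo_paths if normpath(p).startswith(base)]


def delete_repos_in(user_folder: str, dry_run: bool = True) -> list[str]:
    """Delete (or, by default, only list) every repo under user_folder via the Databricks SDK."""
    from databricks.sdk import WorkspaceClient  # lazy import: needs databricks-sdk and credentials
    w = WorkspaceClient()
    repos = [r for r in w.repos.list(path_prefix=user_folder) if r.path]
    targets = set(repos_under(user_folder, [r.path for r in repos]))
    for repo in repos:
        if repo.path in targets:
            print(("would delete " if dry_run else "deleting ") + repo.path)
            if not dry_run:
                w.repos.delete(repo_id=repo.id)
    return sorted(targets)
```

Running `delete_repos_in("/Repos/xxxx@xx.com")` first with the default `dry_run=True` shows what would be removed before anything is deleted.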


- 1017 Views

- 1 replies

- 0 kudos

dev and prod

A "SELECT * FROM" query on my table in PROD returns all the rows, but the same query on my table in DEV returns just one row. What could be the problem?


Tell us more about your environment. Are you using Unity Catalog? What is the table format? What cloud platform are you on? More information is needed.


- 1268 Views

- 2 replies

- 0 kudos

Will Auto Loader read files if it doesn't need to?

I want to run Auto Loader on some very large JSON files. I don't actually care about the data inside the files, just the file paths of the blobs. If I do something like ``` spark.readStream .format("cloudFiles") .option("cloudFiles.fo...


Hi @charliemerrell, yes, Databricks will still open and parse the JSON files, even if you're only selecting _metadata. It must infer the schema and perform basic parsing unless you explicitly avoid it. So, even if you do .select("_metadata"), it doesn't skip...
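One alternative worth testing, which is our assumption and not confirmed in the thread, is to point Auto Loader at the files with `cloudFiles.format` set to `binaryFile` and select only the metadata columns (`path`, `modificationTime`, `length`). Our understanding is that Spark's binaryFile reader loads file bytes only when the `content` column is selected, and the format has a fixed schema, so no JSON inference is needed. Please verify with the input size shown in the Spark UI before relying on it. The function names are hypothetical; `spark` must be an active session on Databricks, since `cloudFiles` is Databricks-only.

```python
# Columns of Spark's binaryFile source, minus "content" (the file bytes).
PATH_COLUMNS = ["path", "modificationTime", "length"]


def path_only_options() -> dict:
    """Auto Loader options that yield one row per file without parsing JSON (assumption)."""
    return {
        "cloudFiles.format": "binaryFile",  # fixed schema, so no schema inference pass
        "pathGlobFilter": "*.json",         # only pick up the JSON blobs
    }


def file_paths_stream(spark, source_dir: str):
    """Streaming DataFrame with one row per newly discovered file and no 'content' column."""
    return (
        spark.readStream.format("cloudFiles")
        .options(**path_only_options())
        .load(source_dir)
        .select(*PATH_COLUMNS)
    )
```

The resulting stream can be written to a Delta table with a checkpoint location as usual, giving an incremental log of new file paths.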


- 1409 Views

- 2 replies

- 1 kudos

Enroll, Learn, Earn Databricks !!

Hello Team, I attended the session at CTS Manyata on 22nd April. I am interested in pursuing the certifications, but while enrolling it shows "you are not a member of any group". Link for the available certifications and courses: https://community...


Hi @samgupta88, you can find it in the Partner Academy. Everything is listed in the partner portal.


- 1405 Views

- 4 replies

- 0 kudos

UCX Installation error

Error Message: databricks.sdk.errors.platform.ResourceDoesNotExist: Can't find a cluster policy with id: 00127F76E005AE12.


Click into each policy in the Compute UI of the Workspace to see if the policy ID exists. If it does, then the account that invoked the SDK method didn't have workspace admin permissions.
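The same check can be scripted from the identity that runs the UCX installer, using the Databricks SDK for Python (`WorkspaceClient().cluster_policies.list()`, whose items carry a `policy_id`). If the ID appears in the Compute UI but not in this list, that is consistent with the permissions explanation above. The helper names are hypothetical; the live check needs `databricks-sdk` and configured credentials, while the comparison function runs on its own.

```python
def missing_policy_ids(wanted: list[str], visible: list[str]) -> list[str]:
    """Return the IDs in `wanted` that do not appear in `visible`, in original order."""
    seen = set(visible)
    return [pid for pid in wanted if pid not in seen]


def check_policy_visible(policy_id: str) -> bool:
    """List cluster policies as the current SDK identity and report whether policy_id is visible."""
    from databricks.sdk import WorkspaceClient  # lazy import: only needed for the live check
    w = WorkspaceClient()  # credentials from environment variables or ~/.databrickscfg
    visible = [p.policy_id for p in w.cluster_policies.list() if p.policy_id]
    missing = missing_policy_ids([policy_id], visible)
    if missing:
        print(f"{policy_id} is not visible to this identity; compare with the Compute UI "
              "and check whether the identity is a workspace admin")
    return not missing
```

For the error in this thread, that would be `check_policy_visible("00127F76E005AE12")`, run with the same credentials the installer used.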


- 2099 Views

- 3 replies

- 0 kudos

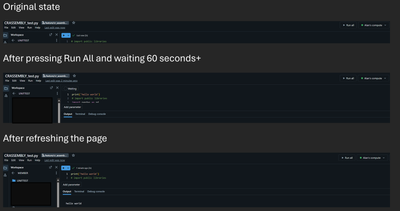

Resolved! .py file running stuck on waiting

Hello, I hope you are doing well. We are facing an issue when running .py files. This is fairly recent; we were not experiencing it last week. As shown in the screenshots below, the .py file hangs on "waiting" after we press "Run all". No matt...


Hello, thanks a lot for your answer. We were getting the required permissions to use Firefox in our org, but in the meantime it started working again in Edge after Edge updated to version 135.0.3179.85 (Official build) (64-bit).


- 9368 Views

- 2 replies

- 2 kudos

Resolved! requirements.txt with cluster libraries

Cluster libraries are supported from version 15.0 (Databricks Runtime 15.0 | Databricks on AWS). How can I specify the requirements.txt file path in the libraries of a job cluster in my workflow? Can I use a relative path? Is it relative to the root of th...


How to install requirements.txt using a GitHub Action: - name: Install workspace requirements.txt on cluster env: CLUSTER_ID: ${{ secrets.DATABRICKS_CLUSTER_ID }} run: | databricks libraries install \ --cluster-id "$CLUSTER_ID" \ --whl "dbfs:/FileStore/enginee...
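For the original question, here is a sketch of a Jobs API payload (as a Python dict) that attaches a requirements file to a task running on a job cluster, via a library entry of the form `{"requirements": "<path>"}`. It uses an absolute workspace path because the thread does not settle whether relative paths work; our understanding, to verify in the docs, is that workspace and Unity Catalog volume paths are the supported locations. The job name, paths, runtime, node type and helper functions are hypothetical.

```python
# Hypothetical job: names, paths, runtime and node type are placeholders.
REQS = "/Workspace/Users/someone@example.com/project/requirements.txt"

JOB_SPEC = {
    "name": "nightly-etl",
    "job_clusters": [{
        "job_cluster_key": "main",
        "new_cluster": {
            "spark_version": "15.4.x-scala2.12",  # requirements.txt libraries need DBR 15.0+
            "node_type_id": "i3.xlarge",
            "num_workers": 2,
        },
    }],
    "tasks": [{
        "task_key": "etl",
        "job_cluster_key": "main",
        "spark_python_task": {"python_file": "/Workspace/Users/someone@example.com/project/etl.py"},
        # Libraries attach per task; "requirements" points at the file to pip-install.
        "libraries": [{"requirements": REQS}],
    }],
}


def requirements_paths(job: dict) -> list[str]:
    """Collect every requirements.txt path referenced by the job's tasks."""
    return [lib["requirements"]
            for task in job.get("tasks", [])
            for lib in task.get("libraries", [])
            if "requirements" in lib]


def looks_supported(path: str) -> bool:
    """Assumption: only absolute workspace or Unity Catalog volume paths are accepted."""
    return path.startswith(("/Workspace/", "/Volumes/"))
```

A quick pre-deploy check such as `all(looks_supported(p) for p in requirements_paths(JOB_SPEC))` catches accidental relative paths before the job runs.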

