- 3113 Views

- 3 replies

- 1 kudos

Unable to distribute the workload to different workers

Hello Team, I am unable to distribute the workload across different Databricks workers while using the Hugging Face GPT-2 LLM. Jobs always use a single node even though the minimum and maximum worker settings are both 2. I would appreciate it if anyone can share a...

- 1 kudos

Hello, we have between 5K and 10K transcript files in ADLS Gen2, and we are using the Hugging Face GPT-2 model to train and serve the model. We expect the workload to be passed to different cluster nodes while serving the LLM and ...

- 2723 Views

- 0 replies

- 0 kudos

Using the OpenAI API in Databricks without iterating rows

Hi everyone, I have a Delta table with a column 'comment'. I would like to add a new column 'sentiment' and calculate it using the OpenAI API. I already know how to create a Databricks endpoint to an external model and how to use it (us...

- 6066 Views

- 3 replies

- 6 kudos

Resolved! Vector Search Index not provisioning

I am trying to deploy a Vector Search Index using both the UI and the Python VectorSearchClient. In both cases the command is successful but in the Catalog Explorer the newly created index stalls with the status 'Provisioning Index' for hours. Previo...

- 6 kudos

Thanks, I didn't change anything either and now it is working.

- 16364 Views

- 13 replies

- 6 kudos

Resolved! Can't Run an AutoML Experiment Because Button is Greyed Out

I am trying to run an AutoML experiment but the button stays greyed out no matter what I do. I've tried different cluster configurations, different datasets, even blew away the instance in Azure and re-created it across two different Azure accounts s...

- 4703 Views

- 4 replies

- 0 kudos

How do I distribute a machine learning process on my Spark DataFrame

Hi, I'm trying to use around 5 numerical features on 3.5 million rows to train and test my model with a Spark DataFrame. My cluster has 60 nodes available but is only using 2. How can I distribute the process or make it more efficient and faster? My cod...

- 0 kudos

@mohaimen_syed - can you please try using the `pyspark.ml` implementation of `RandomForestClassifier` instead of sklearn and see if it works? Below is an example - https://github.com/apache/spark/blob/master/examples/src/main/python/ml/random_forest_classif...

- 1528 Views

- 1 replies

- 0 kudos

AutoML experiment does not start

Hi! Last week I was able to run some experiments in AutoML. Today, even after completing all the values in the form, the start button stays greyed out. (I tried training with the same dataset and parameters that trained fine last week, with no luck.) have any con...

- 0 kudos

@Hypatia - can you please describe the issue in more detail? What changed between the previous good run and now when entering the details on the AutoML experiment page?

- 12867 Views

- 5 replies

- 3 kudos

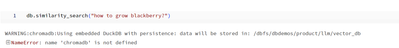

Issues running Chroma function from Dolly Demo

Hello, I'm working through the Dolly demo that was released a few days ago. There are a few lines of code that I have had to alter in order to make it work in my environment. However, I've been trying to run `similarity_search()` on the generated Chroma...

- 3 kudos

As noted in the command output when pip-installing the Python packages, you should then run `dbutils.library.restartPython()`. Please try the following commands: `%pip install -U transformers langchain chromadb accelerate bitsandbytes`, then dbutils.librar...

- 2606 Views

- 1 replies

- 3 kudos

Resolved! Not possible to start AutoML experiment because start button not clickable

Hey everyone, we were trying to start a new AutoML experiment but the 'Start AutoML' button won't activate. We've also noticed that the number of selected training set columns doesn't change even if some columns are unselected. Has anybody encountered t...

- 3 kudos

This seems to be a Databricks-wide problem. Please see related ticket on https://community.databricks.com/t5/machine-learning/can-t-run-an-automl-experiment-because-button-is-greyed-out/td-p/57388

- 3631 Views

- 1 replies

- 0 kudos

Inference table not working

Hi, I'm trying to enable an inference table for my llama_2_7b_hf serving endpoint; however, I'm getting the following error: "Inference tables are currently not available with accelerated inference." Does anyone have an idea on how to overcome this issue? C...

- 0 kudos

From the information you provided, it seems like you are trying to enable inference tables for an existing endpoint. However, the error message suggests that this feature may not be supported with accelerated inference. If you have previously disabled...

- 3329 Views

- 1 replies

- 0 kudos

Model Serving via Unity Catalog

Hi everyone! Has anyone successfully deployed a model saved on Unity Catalog to Model Serving? I get: Event Log: Served model creation failed for served model 'model', config version 15. Error message: Container creation failed. Please see build logs ...

- 6936 Views

- 3 replies

- 5 kudos

Resolved! Access denied error to S3 bucket while running Kinesis spark streaming.

I get the error below while trying to simulate Kinesis streams as described in the Databricks documentation at https://docs.databricks.com/getting-started/streaming.html Error: java.nio.file.AccessDeniedException: Amazon S3; Status Code: 403; Error Code: A...

- 5 kudos

If you run `spark.sparkContext._jsc.hadoopConfiguration().set("fs.s3a.access.key", AWS_ACCESS_KEY_ID)` (plus the matching secret) with any other credentials that have less access than your default ones, this sometimes happens, so running those commands but with your normal secre...
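For readers following this reply, here is a minimal sketch of setting the access key and the secret key together, so that a mismatched pair is less likely. It assumes the standard Hadoop S3A property names `fs.s3a.access.key` and `fs.s3a.secret.key`. The helper names `s3a_credentials` and `apply_s3a_credentials`, and the variable `AWS_SECRET_ACCESS_KEY` in the usage comment, are hypothetical and not taken from the thread.

```python
from typing import Dict, Protocol


class HadoopConf(Protocol):
    """Any object exposing Hadoop's Configuration.set(key, value)."""

    def set(self, key: str, value: str) -> None: ...


def s3a_credentials(access_key: str, secret_key: str) -> Dict[str, str]:
    """Return both S3A credential properties as one dict (hypothetical helper)."""
    if not access_key or not secret_key:
        raise ValueError("both the access key and the secret key are required")
    return {
        "fs.s3a.access.key": access_key,
        "fs.s3a.secret.key": secret_key,
    }


def apply_s3a_credentials(conf: HadoopConf, access_key: str, secret_key: str) -> None:
    """Write both properties to the given configuration object."""
    for key, value in s3a_credentials(access_key, secret_key).items():
        conf.set(key, value)


# Assumed notebook usage, where `spark` is the session Databricks provides:
#   conf = spark.sparkContext._jsc.hadoopConfiguration()
#   apply_s3a_credentials(conf, AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY)
```

Keeping the two properties in one call is only a convenience. Whether the 403 goes away still depends on the permissions attached to the credentials you pass in.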

- 2758 Views

- 1 replies

- 1 kudos

DLT UC for ML use cases

Hi Team, we have a use case to run ML workloads using DLT with UC, and we are facing library issues. Error: INVALID ARGUMENT: no module named ‘importlib_metadata’. We installed them manually by passing imports in notebooks, but we have a lot of missing libraries and we...

- 1 kudos

@Retired_mod, is support for shared clusters for ML on the near-term roadmap, please?

- 1581 Views

- 1 replies

- 0 kudos

Unable to create a serving endpoint for a transformer model (Hugging Face)

Hello, I am trying to create a text classification model based on this blog https://www.databricks.com/blog/rapid-nlp-development-databricks-delta-and-transformers and the notebook accelerator. I just changed the model to use a French BERT, but I cann...

- 0 kudos

Hello Anasse, the LLM landscape has changed drastically since that blog was released in mid-2022. We have new, updated guidance which you can find here (make sure you check out the Next Steps section for the RAG Demo link as well). Additionally, if y...

- 2557 Views

- 1 replies

- 0 kudos

Custom deployment of LLM model in Databricks

Can we deploy our own custom LLM in Databricks? If anyone has any material or a link, please share it with me.

- 1565 Views

- 0 replies

- 0 kudos

Save ML model in the registry fails

Using `mlflow.pyfunc.log_model` to save a model in the model registry fails with the following error: `PicklingError: Can't pickle <built-in function input>: it's not the same object as builtins.input` I've reproduced it locally and got to the conclus...
