- 1016 Views

- 0 replies

- 0 kudos

Hi everyone, my business requirement is to schedule a single job from the 1st to the 10th of the month at 12 AM, 3 AM, 12 PM, 4 PM, and 8 PM, and from the 10th to month end at 1 AM, 12 PM, 4 PM, and 8 PM. Right now we have created 2 schedulers to meet the requirement and are using c...
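
One way to picture the requirement above is a single schedule check that picks the hour set by day of month. This is only a rough sketch, not the poster's setup: `should_run`, `EARLY_HOURS`, and `LATE_HOURS` are hypothetical names, the 10th is assumed to belong to the first window (the post lists it in both), and the two Quartz-style cron strings are an assumption about what the two existing schedulers might look like.

```python
from datetime import datetime

# Hours on a 24-hour clock, taken from the post.
EARLY_HOURS = {0, 3, 12, 16, 20}  # 1st to 10th: 12 AM, 3 AM, 12 PM, 4 PM, 8 PM
LATE_HOURS = {1, 12, 16, 20}      # 11th to month end: 1 AM, 12 PM, 4 PM, 8 PM

# Assumed Quartz-style equivalents (seconds minutes hours day-of-month month day-of-week).
EARLY_CRON = "0 0 0,3,12,16,20 1-10 * ?"
LATE_CRON = "0 0 1,12,16,20 11-31 * ?"


def should_run(now: datetime) -> bool:
    """Return True if `now` falls exactly on one of the scheduled hours."""
    # Assumption: the 10th follows the first window's hours.
    hours = EARLY_HOURS if now.day <= 10 else LATE_HOURS
    return now.minute == 0 and now.hour in hours


if __name__ == "__main__":
    print(should_run(datetime(2024, 1, 5, 3, 0)))   # 3 AM on the 5th
    print(should_run(datetime(2024, 1, 15, 3, 0)))  # 3 AM on the 15th
```

With a check like this, one job could be triggered hourly and exit early when `should_run` is false, at the cost of extra short-lived runs.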

- 3251 Views

- 3 replies

- 0 kudos

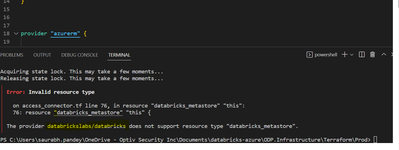

I am currently taking the Data Engineering with Databricks course and have run into an error. I also tried this with my own data and got a similar error. In the lab, we are using Auto Loader to read a Spark stream of CSV files saved in the DB...

Latest Reply

As a small aside, you don't need the third argument in the `StructField`s.
