- 2216 Views

- 1 replies

- 0 kudos

I have a cluster pool with max capacity. I run multiple jobs against that cluster pool. Can on-demand clusters, created within this cluster pool, be shared across multiple different jobs at the same time? The reason I'm asking is I can see a downgrade...

- 2248 Views

- 0 replies

- 0 kudos

Hello, I am working on a Spark job where I'm reading several tables from PostgreSQL into DataFrames as follows:

df = (spark.read

.format("postgresql")

.option("query", query)

.option("host", database_host)

.option("port...

- 1351 Views

- 0 replies

- 0 kudos

The chunk of code in question:

sys.path.append(

spark.conf.get("util_path", "/Workspace/Repos/Production/loch-ness/utils/")

)

from broker_utils import extract_day_with_suffix, proper_case_address_udf, proper_case_last_name_first_udf, proper_case_ud...

- 2676 Views

- 0 replies

- 0 kudos

I have a data engineering pipeline workload that runs on Databricks. The job cluster has the following configuration: workers are i3.4xlarge with 122 GB memory and 16 cores; the driver is i3.4xlarge with 122 GB memory and 16 cores; min workers 4 and max workers 8. We noticed...

- 10685 Views

- 3 replies

- 1 kudos

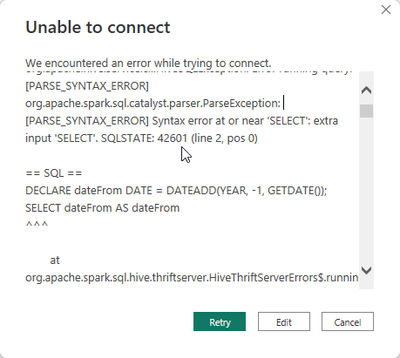

Hi there, I am new to Spark SQL and would like to know if it is possible to reproduce the T-SQL query below in Databricks. This is a sample query, but I want to determine whether a query needs to be executed or not.

DECLARE

@VariableA AS INT

, @Vari...

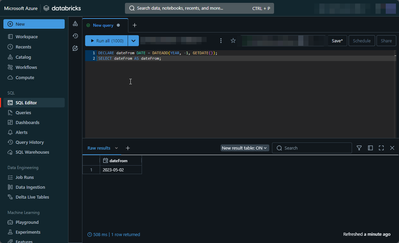

Latest Reply

Since you are looking for a single value back, you can use a CASE expression to achieve what you need.

%sql

SET var.myvarA = (SELECT 6);

SET var.myvarB = (SELECT 7);

SELECT CASE WHEN ${var.myvarA} = ${var.myvarB} THEN 'Equal' ELSE 'Not equal' END AS resu...

2 More Replies