Apply Changes Statistics

Hi Team, I just wanted to know why APPLY_CHANGES does not show how many rows were upserted in the graph. Is this something in the works for the future? Cheers, G

- 6655 Views

- 0 replies

- 1 kudos


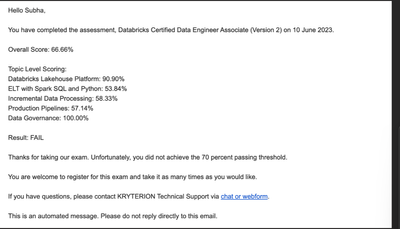

Hi Team, I took the Databricks Certified Data Engineer Associate (Version 2) exam on 10th June 2023. I got 66.66% and I intend to retake it. Is it possible to get a voucher for the retest? That would be a great encouragement and help. I would really appre...

Hi Databricks Team, I sat the Databricks Certified Machine Learning Professional exam for the 2nd time, but didn't pass again. I got 66.66% overall. I am a seasoned Databricks user, but this particular exam is quite an unorthodox one. Nevertheless I know I can be ...

I'm following this post to run an init script to install gdal: https://community.databricks.com/t5/get-started-discussions/gdal-on-databricks-cluster-runtime-12-2-lts/m-p/39091#M662

My script is simply:

#!/bin/bash
apt-get update && apt-get...

I created an external table and set its location in another AWS account (an External Location); let's call it Account B. The Databricks workspace is deployed in a different account; let's call it Account A. Here is my script: CREATE TABLE devtest.governance.dbtable1(col...

I think you should start by checking the cross-account role access: https://docs.databricks.com/en/administration-guide/account-settings-e2/audit-aws-cross-account.html#step-3-configure-cross-account-support-optional Check whether this is set up correctly.

Hello, we are migrating to Unity Catalog (UC), and for very few of our tables, we get the below error when trying to write or even display them. We are using UC-enabled clusters, usually with runtime version 12.2 LTS. The below error, when it happens...

Hello, Thanks for contacting Databricks Support. The error message indicates a problem with the configuration key fs.azure.account.key. This configuration key is used to provide the access key for the Azure Data Lake Storage account. Not sure if th...

Hello, I am having a pretty major problem with the Databricks Workflows UI: when I look at the list of jobs, the "Last Run" column does not have any data in it. This is kind of a big problem because now I don't have a good way of getting visibility in...

Hi, I would appreciate some advice. I have a DLT CDC type 2 table with this definition: dlt.create_streaming_table('`my_table_dlt_cdc`') dlt.apply_changes( target = 'my_table_dlt_cdc', source = 'source', keys = ['id'], sequence_by = col('snapshot_date'), ...

For anyone who sees this post in the future: I was missing one argument, apply_as_deletes.
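To picture why a missing `apply_as_deletes` matters, here is a minimal pure-Python sketch. It is not the DLT API or its implementation, and it models the simpler "latest row per key" (type 1) behaviour rather than the type 2 history from the post. The function name `apply_changes_sketch`, the `op` column, and the sample rows are all hypothetical; only `id` and `snapshot_date` come from the definition above.

```python
def apply_changes_sketch(events, key, sequence_by, is_delete):
    """Conceptual model only: the latest event per key wins, and events
    matched by is_delete remove the key instead of upserting it."""
    target = {}
    # Process events in sequence order, as sequence_by would dictate.
    for event in sorted(events, key=lambda e: e[sequence_by]):
        k = event[key]
        if is_delete(event):
            target.pop(k, None)   # treat the event as a DELETE
        else:
            target[k] = event     # treat the event as an upsert
    return target


# Hypothetical CDC feed; the "op" column is an assumption for illustration.
events = [
    {"id": 1, "snapshot_date": "2024-01-01", "op": "UPSERT", "name": "a"},
    {"id": 2, "snapshot_date": "2024-01-01", "op": "UPSERT", "name": "b"},
    {"id": 1, "snapshot_date": "2024-01-02", "op": "DELETE", "name": "a"},
]

# With a delete condition, id 1 is removed from the target.
with_deletes = apply_changes_sketch(
    events, "id", "snapshot_date", lambda e: e["op"] == "DELETE"
)

# Without one, the delete event is just another upsert and id 1 stays.
without_deletes = apply_changes_sketch(
    events, "id", "snapshot_date", lambda e: False
)
```

In the second call the delete record is written into the target like any other change, which is roughly the symptom you would expect when the delete condition is left out.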

Yesterday all of my notebooks seemingly changed to have python formatting (which seems to be in this week's release), but the unintended consequence is that shift + tab (which used to show docstrings in python) now just un-indents code, and tab inser...

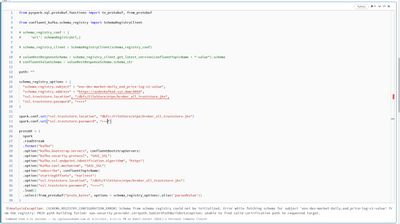

Hi, I am unable to connect to a secure schema registry (running on https); it fails with the error below. [SCHEMA_REGISTRY_CONFIGURATION_ERROR] Schema from schema registry could not be initialized. Error while fetching schema for subject 'env...

Hi, I'm trying to load historical data with the DLT workflow and facing an Out of Memory or Executor heartbeat lost error. The historical data loads fine with normal processing, but fails within the DLT workflow. Tried repartitioning and scaling the cluster, bu...

Hello Databricks Community, I asked the same question on the Get Started Discussion page, but it feels like this is the right place for it. I'm reaching out with a query regarding access control in the hive_metastore. I've encountered behavior...

That is expected. The single user mode is the legacy standard + UC ACL enabled. https://docs.databricks.com/en/archive/compute/cluster-ui-preview.html#how-does-backward-compatibility-work-with-these-changes For your case, you need the hive table acl ...

Hi All, I am trying to insert a DF into a Synapse table. I need to insert string type columns in the DF into Nvarchar fields in the Synapse table. I am getting the error 'data type that cannot participate in a columnstore index'. Can someone guide on the i...

import com.microsoft.azure.sqldb.spark.config.Config
import com.microsoft.azure.sqldb.spark.connect._
import com.microsoft.azure.sqldb.spark.query._
val query = "Truncate table tablename"
val config = Config(Map( "url" -> dbutils.secrets.get(scope = ...

@Someswara Durga Prasad Yaralgadda: A NoClassDefFoundError occurs when a class that was available at compile time is not available at runtime. This could be due to a few reasons, including a missing dependency or an incompatible ...

Unable to create workspace after deleting existing one without deleting clusters and other resources.

I created a notebook that uses Autoloader to load data from storage and append it to a bronze table in the first cell. This works fine, and Autoloader picks up new data when it arrives (the notebook is run using a Job). In the same notebook, a few cell...

Thanks @Retired_mod. In a case where it's not possible or not practical to implement a pipeline with DLTs, what would that "retry mechanism" be based on? I.e., is there an API other than the table history that can be leveraged to retry until "it wo...
