- 9221 Views

- 5 replies

- 0 kudos

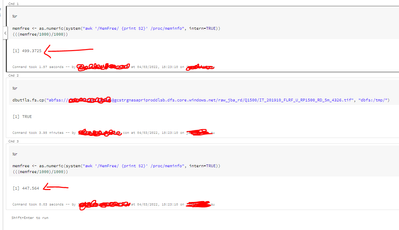

I am using dbutils.fs.copy(abfss://container/provsn/filen[ame.txt, abfss://container/data/sasam.txt). When I try to copy the file with this method, it throws a URISyntaxException near the square bracket. How can I read and copy this file?

Latest Reply

Judging from the stack trace, this looks like a URISyntaxException. The easiest solution would be to rename the file so that the filename contains no square brackets. If that is not an option, it might help to URL-encode the path - https://stackoverflow.com/que...
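As a rough sketch of the URL-encoding idea, the snippet below percent-encodes the square bracket with Python's standard `urllib.parse.quote`. The helper name `encode_path` is hypothetical, and whether `dbutils.fs` accepts a percent-encoded `abfss://` path is an assumption that is not confirmed in this thread, so try it on a test file first.

```python
from urllib.parse import quote


def encode_path(path: str) -> str:
    """Percent-encode characters that are illegal in a URI (hypothetical helper).

    '/' and ':' are left untouched so the scheme and the directory
    separators of the path survive; '[' becomes '%5B' and ']' becomes '%5D'.
    """
    return quote(path, safe="/:")


# Path taken from the question above.
source = "abfss://container/provsn/filen[ame.txt"
encoded_source = encode_path(source)
print(encoded_source)
```

The encoded string can then be passed as the source argument of the copy call. If the copy still fails, renaming the file remains the more reliable fix.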

4 More Replies

- 6768 Views

- 3 replies

- 2 kudos

I have created a Databricks workflow job with notebooks as individual tasks, linked sequentially. I assign a value to a variable in one notebook task (e.g. batchid = int(time.time())). Now I want to pass this batchid variable to the next notebook task. What...

Latest Reply

@brickster You would use dbutils.jobs.taskValues.set() and dbutils.jobs.taskValues.get(). See the docs for more details: https://docs.databricks.com/workflows/jobs/share-task-context.html
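A minimal sketch of how the two calls might fit together for the batchid case from the question. The helper names `publish_batch_id` and `read_batch_id` are hypothetical, as is the upstream task name `set_batch`. The `dbutils` object is passed in explicitly only so the sketch stays self-contained; in a notebook it is already available as a global.

```python
import time


def publish_batch_id(dbutils):
    """Upstream task: compute batchid and store it as a task value."""
    batchid = int(time.time())
    dbutils.jobs.taskValues.set(key="batchid", value=batchid)
    return batchid


def read_batch_id(dbutils, upstream_task_key):
    """Downstream task: read batchid set by the task named upstream_task_key.

    debugValue is what gets returned when the notebook runs outside a job
    (for example, interactively); 0 here is an arbitrary placeholder.
    """
    return dbutils.jobs.taskValues.get(
        taskKey=upstream_task_key,
        key="batchid",
        debugValue=0,
    )
```

In the first notebook task you would call `publish_batch_id(dbutils)`, and in the next one `read_batch_id(dbutils, "set_batch")`, where `"set_batch"` stands in for whatever the upstream task is actually named in the job.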

2 More Replies